In this blog post, I am going to show you how you can quickly add a very basic Date table to your model. You might be wondering – why do you need a Date table? A Date table is very important to be able to use Time Intelligence DAX functions such as year to date, month to date, quarter to date, same period last year, parallel period, and many other similar calculations that are based on the time/period. There are approximately 35 built-in time intelligence DAX functions and we can leverage them by adding a Date table to our model.

The basic feature of the Date table is that you need continuous dates in your table. There is only unique date value, and on top of it you can add other columns, like Year, Month, Weekday, etc. based on your requirements of how you want to slice and dice the data. Some people may have a requirement to slice the data by Week Number (of the year) and others might not need this column as they would never use it.

From my experience, I have found that the following are key columns to include in your Date table. These are used quite frequently:

- Date (this is the basic feature in the Date table, both the continuous date and unique values)

- Year

- Quarter

- Month Name

- Month Number

- Month Year

So, what should the start and end date be in the Date table? Usually, you want to create a Date table from the start of the year (January 1st) , based on first date in your fact table. For example, if your first sale is on February 15, 2019 in your fact table then your Date table should start from January 1, 2019. The last date should be at least December 31 of the current year and if you have future date transactions in your model then the last date should be based on last date in your transaction table. Let’s assume that you have a transaction table which has future date transactions – let’s say some in 2021 and some in 2022. In this case, our last date for our Date table will be December 31, 2022. There is no problem with having dates in the future.

Let’s look at some examples of what the date range would be in the Date table at the time of writing this post (May 20th, 2020):

| Earliest date in transaction table | Last date in transaction table | Date ranges for Date table |

|---|---|---|

| February 15, 2018 | January 5, 2019 | January 1, 2018 – December 31, 2020 |

| June 20, 2019 | May 31, 2021 | January 1, 2019 – December 31, 2021 |

| January 13, 2020 | December 31, 2023 | January 1, 2020 – December 31, 2023 |

Now, how do we create a Date table? We have two DAX functions available to create this table: CALENDAR and CALENDARAUTO. The main difference between these two function is that you pass the date range to the CALENDAR function whereas the CALENDARAUTO function looks at your data model and creates a date range based on the minimum and maximum date available in the model. The latter is exactly what we’re looking for.

Assume that I have a Sales table in my model and that the first sales date is on April 3rd, 2017. There is also a future sales date on November 30, 2022. We will use the CALENDARAUTO function to create a Date table and add other basic columns like Year, Month, Quarter etc.

Click the Calculated Table icon in the Modelling tab to add the DAX expression to create a Date table.

Simply copy the below code to create the Date table in your model

Date =

//start date in my case I'm setting to 2010

//you can get date from your transaction table and start from that year, something like this

//in __startDate replace DATE (2010, 1, 1 )

//with -> DATE ( YEAR ( MIN ( YourTransactionTable[DateColumn] ) ), 1, 1 )

VAR __startDate = DATE ( 2010, 1, 1 )

VAR __endDate = DATE ( YEAR ( TODAY() ), 12, 31 )

VAR __dates = CALENDAR ( __startDate, __endDate )

RETURN

ADDCOLUMNS (

__dates,

"Year", YEAR ( [Date] ),

"Month Number", MONTH ( [Date] ),

"Month Name", FORMAT ( [Date], "MMMM" ), --use MMMM for full month name, January instead of Jan

"Month", FORMAT( [Date], "MMM, YYYY" ), --use MMMMM for full month name, January instead of Ja

"Month Sort", FORMAT( [Date], "YYYY-MM" ),

"Quarter", "Q" & FORMAT( [Date], "Q, YYYY" ),

"Quarter Sort", FORMAT ( [Date], "YYYY-Q" )

)With the above DAX expression, we get a Date table with the following columns:

Now let’s check the date range we have in our Date table against what we have in our Sales table. I created MIN and MAX measures on the Date columns from both tables and depicted the results in the visual below. We can clearly see that our Date table has a date range from January 1, 2017 to December 31, 2022 and that the first date of sale is April 3, 2017 and the last date of sale is November 30th, 2022. The date range in our Date table clearly aligns with this.

Another good feature of using the CALENDARAUTO function is that we don’t need to worry about changing the date range. As new data comes in when we refresh our Sales table, the date range for the Date table will automatically grow based on the dates in the Sales table.

One important aspect after we create the Date table is to mark this as a “Date Table.” I don’t want to go into too much detail about this, but you can read all about it here. Once we have marked our Date table, we are going to set the sorting of our columns so that when we visualize our data, our values show up correctly. For example, if we don’t sort our Month Name column then everywhere we use Month Name it will be sorted alphabetically. This means that April will shows up as the first month instead of January. I also want to hide any unwanted columns – in this case I’m going to hide the Month Sort and Quarter Sort columns because they are only used for sorting purposes but are never going to be directly used in the visualization.

You can read more about sort by column here.

The following table lists all the columns that we are going to sort and what columns we are going to sort them by.

| Column Name | Sort by Column Name |

|---|---|

| Month Name | Month Number |

| Month | Month Sort |

| Quarter | Quarter Sort |

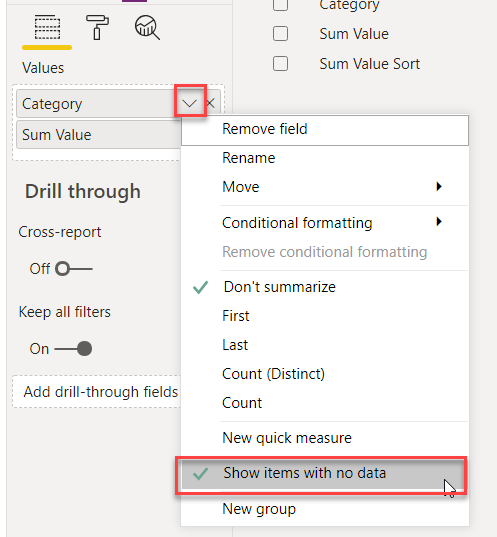

Something else I always do is change the aggregation method of any number columns to “Don’t Aggregate.” In our case, Year and Month Number are showing as aggregated columns and we know that we will never have a Sum of the Year column or the Month Number column.

One last thing – make sure you create a relationship between the Date column from your other tables to the Date table. In this case, the Date column from the Sales table to the Date column from the Date table. Now you can use the new Date table for all your Time Intelligence measures!

As best practice, always add a Date table in your model